Everything you need to know about NeRFs

Powered by advanced machine learning, Neural Radiance Fields (NeRFs) are capable of generating impressive 3D digital twins from 2D images of real-world objects and scenery. In our latest ‘Nerds that NeRF’ webinar, disguise’s VP of Virtual Production, Addy Ghani, gathered leaders from Studio Lab, NVIDIA and Volinga to discuss exactly what NeRFs can offer film and television productions. Let’s explore five key takeaways from their discussion.

1. Create quickly and easily

“NeRFs are a new AI tool that understand the content of a 3D scene,” says Fernando Rivas-Manzaneque, co-founder of Volinga, the specialist media production suite for NeRFs. This goes beyond simple geometry: the tool can also understand and modify reflections and other environmental elements.

The most immediate advantage that NeRFs offer users is the ability to recreate real-world objects and locations in a digital space. The generated scenes are accessible and adaptable, and can represent the subject from every viewpoint.

The NeRF creation process offers a significant advantage over previous processing options. Because so much of the generation process is led by artificial intelligence (AI), the user input required is minimal, and can be gathered incredibly quickly. One example from the webinar showed extensive 3D scenery of a picturesque alleyway in a European town. The details are exquisitely realised and photo-realistic - and were captured from a single walk through the real alley using a 360° camera.

Ghani added that users are “generating this 3D world and creating the viewpoints without the weight and complexity of a 3D mesh, which is really mind-boggling.”
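To make “without a 3D mesh” a little more concrete, here is a minimal sketch of the volume rendering idea behind NeRFs: a function returns a density and a colour for any 3D point, and a pixel is produced by blending samples along a camera ray. This is an illustration only, not the method used by Volinga or NVIDIA. The `toy_field` function is a hypothetical stand-in for a trained network, and the sample count and ray range are assumed values chosen for the example.

```python
import math

def toy_field(point):
    """Hypothetical stand-in for a trained NeRF network.

    Returns (density, rgb) at a 3D point: a red sphere of radius 1
    centred on the origin, and empty space everywhere else.
    """
    x, y, z = point
    inside = x * x + y * y + z * z <= 1.0
    density = 5.0 if inside else 0.0
    return density, (1.0, 0.0, 0.0)

def render_ray(origin, direction, near=0.0, far=4.0, n_samples=64):
    """Blend colour along one camera ray using evenly spaced samples."""
    step = (far - near) / n_samples
    transmittance = 1.0  # fraction of light that has not been absorbed yet
    colour = [0.0, 0.0, 0.0]
    for i in range(n_samples):
        t = near + (i + 0.5) * step  # midpoint of the i-th segment
        point = tuple(o + t * d for o, d in zip(origin, direction))
        density, rgb = toy_field(point)
        alpha = 1.0 - math.exp(-density * step)  # opacity of this segment
        weight = transmittance * alpha
        for c in range(3):
            colour[c] += weight * rgb[c]
        transmittance *= 1.0 - alpha
    return tuple(colour)

# One ray that passes through the sphere and one that misses it.
hit = render_ray(origin=(0.0, 0.0, -2.0), direction=(0.0, 0.0, 1.0))
miss = render_ray(origin=(3.0, 0.0, -2.0), direction=(0.0, 0.0, 1.0))
print("hit:", hit)
print("miss:", miss)
```

No triangles or textures are stored anywhere in this sketch. A new viewpoint is simply a new set of rays through the same function, which is why the scene can be viewed from any angle.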

2. Replicate global locations

Walks like these, through scenic towns and across sprawling mountain ranges, are what NeRFs were made for. According to Ian Messina, Director of Virtual Production at Studio Lab, “our goal is really to immerse the viewer, selling that we’re in a different world, a different moment in time.”

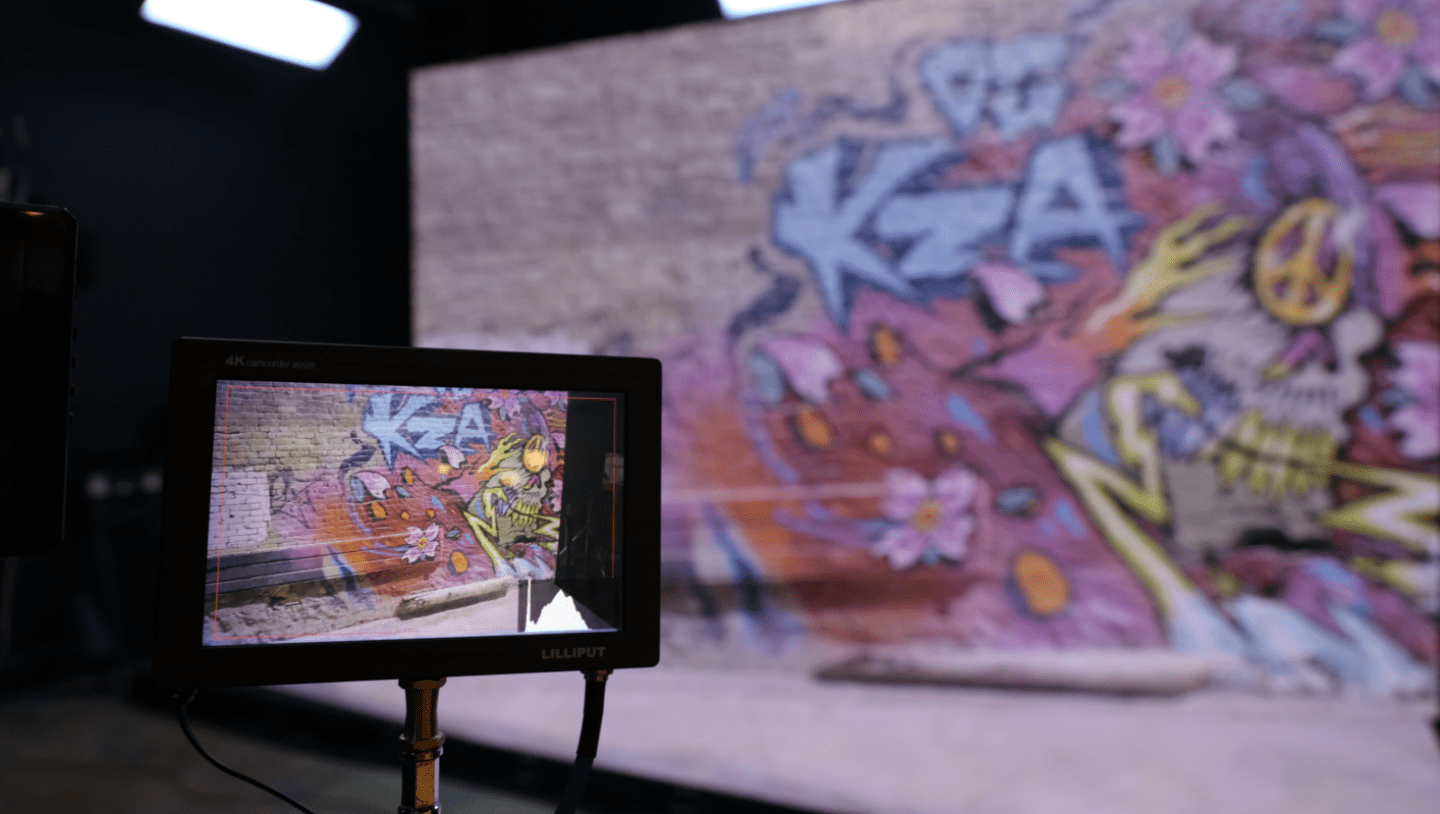

One of the primary attractions of virtual production has always been the ability to recreate far-off locales in a studio environment, saving on the time and expense of on-location shooting. But the challenge has always remained - how to best capture the footage that goes up on the LED screens back on set?

For Messina, this is a key advantage of NeRFs. “I’m really excited by the ability to have someone all the way across the world go and scan a location that we can bring back to our studio,” he says. The simple process means that an individual can send up a drone in the middle of the Himalayas and, in just a few minutes, upload the content to the platform and have a fully-realised scene that can be used on-set thousands of miles away.

3. Adapt real-world locations for your story

With content creation fuelled by AI technology, NeRFs offer unparalleled flexibility when building virtual production sets. Photogrammetry has so far been the standard for 3D modeling of spaces, but it can be a lengthy process, complicated by reflections that often need to be carefully avoided or edited out using CGI.

NeRFs use machine learning to identify issues like these and adjust them during the initial processing. Crewmembers’ legs caught in the bottom of a mirror are removed automatically, saving on unwanted post-production costs.

But this is just a small example of the power NeRFs have to adapt their 3D scenery. Large objects can be taken out of the set using the same principle. Ghani shared a street scene in which every car had been automatically removed, enabling productions to resimulate the street as needed.

“You can generate an alternate reality to give more use cases,” says Jason Schugardt, Senior Solutions Architect for NVIDIA. A crowded road becomes empty, ready for CGI to introduce whatever the script calls for - whether that’s creeping vines for a post-apocalyptic setting, or vintage cars for a period production.

4. Access bigger opportunities

NeRFs represent “an enabling technology that will disrupt different markets,” says Rivas-Manzaneque. “It’s going to lower the barrier for a lot of people to do a lot of things.”

One of the big reasons for this is the accessibility of the technology. All the technology you need to capture a NeRF is already in your pocket. Schugardt champions the latest iPhones as ‘an easy way in’. The quality of their in-built cameras means that it’s possible to create a high-quality NeRF using tools that are widely accessible to the public.

This means that NeRFs are already accessible to smaller productions. Ghani says, “I think this is going to unlock opportunities for a lot of people that have never really touched 3D before, but can start anew here.”

5. Build towards a collaborative future

All of this leads to the question: what does the future of NeRFs look like? One thing is for certain: there is no lack of ideas on the matter. The Computer Vision and Pattern Recognition Conference (CVPR) recently had 175 papers presented on NeRFs alone.

Ghani believes we’re only scratching the surface on use cases for the technology. “Everyone from real estate businesses to Google are finding ways to make the most of this groundbreaking technology,” he says. The panel even showed generative AI combined with NeRFs: a 3D image created using a NeRF was adapted digitally using simple prompts, in this case turning a man into a bronze statue one moment and Albert Einstein the next.

It’s clear that virtual production will play a big role in leading the industry into the future. “As an industry,” says Schugardt, “there’s so much innovation here, and I think that’s part of the power of everyone working on the same thing. I’d love for us not to take too long to find a standard and push that forward as well. I’d love the community to work together on forward-thinking about where we can go.”

NeRF quality and usability will certainly improve rapidly over the next few years, and with that will come powerful use cases across multiple industries, from media and entertainment to autonomous driving, geolocation and mapping, and even AR and VR world building.

6. Deliver your NeRFs with disguise and Volinga

Volinga sits at the forefront of much of this groundbreaking work, developing the technology and exploring its potential while delivering impressive results. “This is the format we’ve been waiting for to apply all the knowledge we had in 2D images and generative images into a 3D world,” says Rivas-Manzaneque.

Volinga has integrated with disguise, allowing users of disguise RenderStream™ to deliver 3D worlds in minutes. Simply upload footage from any capture device (a camera or a high-spec camera phone) via the Volinga RenderStream™ plugin. Then, you can run the scene in real time with little optimisation needed, cutting down on lengthy pre-production work.
